It’s review and movie time.

After a number of smaller LinkedIn and Twitter updates, we thought we’d take to the blog to announce two really important pieces of news.

(more…)

First things first: We’re fully aware that XR4DRAMA should have been a wrap by the end of 2022 (at the latest) – and that it’s January 2023 now. The reason for the delay was, of course, the Corona pandemic. And all the challenges that came with it. There were things we simply couldn’t do for months and months, and thus we couldn’t finish on time. We’re not ashamed. And luckily, the EC granted us an extension. The good news: We’re absolutely on track to meet the new XR4DRAMA deadline, which is April 30th. Let’s take a look at what will (have to) happen until then.

(more…)

Before we can slowly get into XR Xmas mode, we have a rather busy week ahead of us: CW 48 will be packed with a full consortium meeting and a showcase at yet another big conference.

(more…)

Sometimes, when tech and use case partners work together really well, prototypes end up with a couple of neat features that weren’t even listed in the original requirements docs. A good example of this is the current version of the XR4DRAMA main mobile application: up2metric and DW closely cooperated to augment the XR features.

(more…)

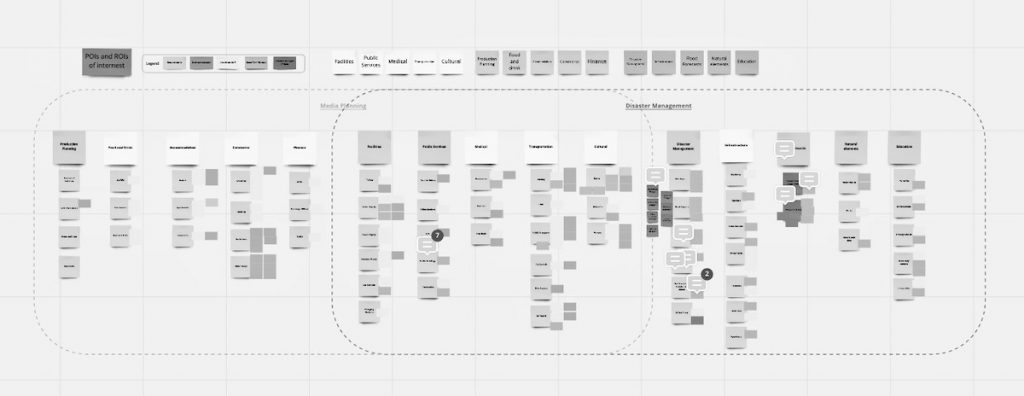

In yet another look behind the scenes, we explain how we manage to augment the XR4DRAMA maps with more sophisticated 3D models.

(more…)

The consortium is on the road again. Next month, we’re going to Brussels to be part of stereopsia, one of Europe’s (and the world’s) leading conferences on immersive tech and media.

(more…)

Here’s another little peek behind the scenes:

(more…)

When the consortium is in the mood for meetings, it’s in the mood for meetings. Less than two weeks after our media production pilot and the first plenary on Corfu, we got together yet again. On May 17th, we met in the “Sala Chiesa” at the beautiful, historic Palazzo Trissino al Corso, located in the center of Vicenza. Our goal this time: Do an XR4DRAMA risk/disaster prevention and management test run, led by consortium member AAWA and their local partners, the emergency control crew of Vicenza municipality.

(more…)

So this actually happened: After 17 months of working remotely with seven teams based in four countries, everybody hopped into a car, a bus, onto a train, a ferry, a plane – and went to Corfu, where we had a much-needed all-hands workshop and consortium meeting at the Ariti Hotel in Kanoni in the first week of May 2022. We still have to pinch ourselves. What’s going on in terms of prototype development, project management and project exploitation will be the subject of a number of upcoming deliverables. This post instead summarizes the activities during our first “real” get-together and DW’s media pilot test run, which was at the center of it all. We also have a couple of nice black & white shots for you.

(more…)

In this series of blog interviews, we further introduce the people and organizations behind XR4DRAMA by asking them about their work and their particular interest in the project. Our seventh and last interview partner is Christos Stentoumis, co-founder of the Athens-based IT engineering company up2metric.

(more…)